FAQ: Over Provisioning aka Thin Provisioning in ONTAP

Applies To

Over Provisioning/Thin Provisioning

Answer

- Over provisioning, also known as thin provisioning, provides storage administrators the ability to provision more storage space than is available in the containing aggregate.

- ONTAP provides two types of thin provisioning:

  - Volume thin provisioning

  - LUN thin provisioning

Thin provisioning for volumes

- When a thinly provisioned volume is created, ONTAP does not reserve aggregate space for it in advance.

- As data is written to the volume, the volume requests the storage it needs from the aggregate to accommodate the write operation.

- Using thin-provisioned volumes enables you to overprovision your aggregate. This introduces the risk that a volume cannot obtain the space it needs if the aggregate runs out of free space.

- You create a thin-provisioned volume by setting its space guarantee to none.

- With a space guarantee of none, the volume size is not limited by the aggregate size.

- In fact, each volume could, if required, be larger than the containing aggregate.

- The storage provided by the aggregate is used only as data is written to the volume.
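The volume-level behavior described above can be illustrated with a small toy model. This is a minimal Python sketch, not ONTAP code. The `Aggregate` and `Volume` classes, the `OutOfSpaceError` exception, and the GB-based accounting are hypothetical, and the model ignores metadata, Snapshot reserve, and other overheads. In the model, a space guarantee of `volume` takes the full size from the aggregate at creation, while `none` takes space only as data is written:

```python
class OutOfSpaceError(Exception):
    """Raised when a container cannot supply the space a request needs."""


class Aggregate:
    """Hypothetical toy aggregate: a fixed pool of physical space, in GB."""

    def __init__(self, capacity_gb: float) -> None:
        self.capacity_gb = capacity_gb
        self.consumed_gb = 0.0  # space taken by guarantees or by written data

    @property
    def free_gb(self) -> float:
        return self.capacity_gb - self.consumed_gb

    def take(self, amount_gb: float) -> None:
        if amount_gb > self.free_gb:
            raise OutOfSpaceError(
                f"aggregate needs {amount_gb} GB but has {self.free_gb} GB free"
            )
        self.consumed_gb += amount_gb


class Volume:
    """Hypothetical toy volume with a space guarantee of 'volume' or 'none'."""

    def __init__(self, aggr: Aggregate, size_gb: float, guarantee: str) -> None:
        if guarantee not in ("volume", "none"):
            raise ValueError("guarantee must be 'volume' or 'none'")
        self.aggr = aggr
        self.size_gb = size_gb
        self.guarantee = guarantee
        self.used_gb = 0.0
        if guarantee == "volume":
            # Thick: the whole volume size is taken from the aggregate up front,
            # so creation fails if the aggregate cannot hold it.
            aggr.take(size_gb)
        # Thin ('none'): nothing is taken at creation, so size_gb may even
        # exceed the aggregate's capacity.

    def write(self, amount_gb: float) -> None:
        """Write new data (overwrites of existing blocks are not modelled)."""
        if self.used_gb + amount_gb > self.size_gb:
            raise OutOfSpaceError("volume is full")
        if self.guarantee == "none":
            # Thin: space comes from the aggregate only as data is written;
            # this raises if the overprovisioned aggregate has run out.
            self.aggr.take(amount_gb)
        self.used_gb += amount_gb


aggr = Aggregate(capacity_gb=100)
vol_a = Volume(aggr, size_gb=150, guarantee="none")  # larger than the aggregate
vol_b = Volume(aggr, size_gb=150, guarantee="none")
vol_a.write(60)
print(aggr.free_gb)  # 40.0
try:
    vol_b.write(60)  # only 40 GB left in the aggregate
except OutOfSpaceError as err:
    print("write failed:", err)
```

In this usage example, both 150-GB thin volumes are larger than the 100-GB aggregate. The second write fails only because the first volume has already consumed most of the aggregate's space.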

Thin Provisioning for LUNs

- Thin provisioning enables storage administrators to provision more storage on a LUN than is currently available on the volume.

- Users often do not consume all the space they request, which reduces storage efficiency if space-reserved LUNs are used.

- By over-provisioning the volume, storage administrators can increase the capacity utilization of that volume.

- When a new thinly provisioned LUN is created, it consumes almost no space from the containing volume. As blocks are written to the LUN and space within the LUN is consumed, an equal amount of space within the containing volume is consumed.

- With thin provisioning, you can present more storage space to the hosts connecting to the storage controller than is actually available on the storage controller.

- Storage provisioning with thinly provisioned LUNs enables storage administrators to provide users with the storage they need at any given time.
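The LUN-level behavior described above can also be sketched as a toy model. This is a minimal Python sketch that assumes hypothetical `ContainingVolume` and `Lun` classes, measures space in GB, and ignores metadata and Snapshot overhead. It does not reflect how ONTAP tracks blocks internally. A space-reserved LUN takes its full size from the volume when it is created. A thin LUN consumes an equal amount of volume space as blocks are written:

```python
class OutOfSpaceError(Exception):
    """Raised when the containing volume cannot supply the space requested."""


class ContainingVolume:
    """Hypothetical toy volume whose space is shared by its LUNs (GB)."""

    def __init__(self, size_gb: float) -> None:
        self.size_gb = size_gb
        self.consumed_gb = 0.0

    @property
    def free_gb(self) -> float:
        return self.size_gb - self.consumed_gb

    def take(self, amount_gb: float) -> None:
        if amount_gb > self.free_gb:
            raise OutOfSpaceError(
                f"volume needs {amount_gb} GB but has {self.free_gb} GB free"
            )
        self.consumed_gb += amount_gb


class Lun:
    """Hypothetical toy LUN: space-reserved (thick) or thinly provisioned."""

    def __init__(self, vol: ContainingVolume, size_gb: float, space_reserve: bool) -> None:
        self.vol = vol
        self.size_gb = size_gb
        self.space_reserve = space_reserve
        self.written_gb = 0.0
        if space_reserve:
            vol.take(size_gb)  # reserved LUN: full size taken at creation

    def write(self, amount_gb: float) -> None:
        """Write new blocks (overwrites of existing blocks are not modelled)."""
        if self.written_gb + amount_gb > self.size_gb:
            raise OutOfSpaceError("write exceeds the LUN size")
        if not self.space_reserve:
            # Thin LUN: an equal amount of volume space is consumed per write.
            self.vol.take(amount_gb)
        self.written_gb += amount_gb


vol = ContainingVolume(size_gb=100)
thin = Lun(vol, size_gb=80, space_reserve=False)
print(vol.free_gb)  # 100.0 (a new thin LUN takes no space in this model)
thin.write(10)
print(vol.free_gb)  # 90.0
reserved = Lun(vol, size_gb=50, space_reserve=True)
print(vol.free_gb)  # 40.0
```

The usage lines also show that thin and space-reserved LUNs can share one volume. After the reserved LUN is created, writes to it do not change the volume's free space, because that space was already taken.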

The advantages of thin provisioning are as follows:

- Provides better storage efficiency.

- Allows free space to be shared between LUNs.

- Enables LUNs to consume only the space they actually use.

Example:

The following example shows a volume with thinly provisioned LUNs: an administrator provisions a 4,000-GB volume with five thinly provisioned LUNs, each configured with 1,000 GB of space, as shown in Table 1:

Table 1. Thinly provisioned LUNs on a 4,000-GB volume

| LUN name | Space actually used by the LUN | Configured space available to the LUN |
|---|---|---|
| lun1 | 100 GB | 1,000 GB |
| lun2 | 100 GB | 1,000 GB |
| lun3 | 100 GB | 1,000 GB |
| lun4 | 100 GB | 1,000 GB |
| lun5 | 100 GB | 1,000 GB |
| Totals | 500 GB | 5,000 GB |

- Each of the five LUNs uses 100 GB of storage, but each can grow to use up to 1,000 GB.

- In this configuration, the volume is overprovisioned by 1,000 GB (5,000 GB configured on a 4,000-GB volume). Because the LUNs actually use only 500 GB, the volume still has 3,500 GB of available space.

- Thin provisioning allows LUNs to grow at different rates.

- From the pool of available space, a LUN can grow as blocks of data are written to that LUN.

- If all the LUNs used all their configured space, then the volume would run out of free space.

- The storage administrator needs to monitor the storage controller and increase the size of the volume as needed.
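The arithmetic behind Table 1 can be checked with a few lines of Python. This sketch uses a hypothetical `summarize` helper and, like the table, ignores metadata, Snapshot reserve, and other volume overheads:

```python
def summarize(volume_size_gb: int, luns: dict[str, tuple[int, int]]) -> dict[str, int]:
    """Summarize thin LUNs given as {name: (used_gb, configured_gb)}."""
    used = sum(u for u, _ in luns.values())
    configured = sum(c for _, c in luns.values())
    return {
        "used_gb": used,
        "configured_gb": configured,
        # How far the LUNs' configured sizes exceed the volume.
        "overprovisioned_by_gb": max(0, configured - volume_size_gb),
        # Free space left in the volume, given what the LUNs actually use.
        "available_gb": volume_size_gb - used,
    }


# Table 1: five LUNs, each using 100 GB of a configured 1,000 GB.
table1 = {f"lun{i}": (100, 1_000) for i in range(1, 6)}
print(summarize(4_000, table1))
# {'used_gb': 500, 'configured_gb': 5000, 'overprovisioned_by_gb': 1000, 'available_gb': 3500}
```

If every LUN filled to its configured 1,000 GB, the LUNs would need 5,000 GB. The 1,000-GB overprovisioned amount is exactly the shortfall the administrator must watch for.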

You can have thinly provisioned and space-reserved LUNs in the same volume and the same aggregate. For example, you can use space-reserved LUNs for critical production applications and thinly provisioned LUNs for other types of applications.

Over Provisioning

- As a result of being able to create logical containers (volumes or LUNs) whose combined size is larger than the aggregate, it is a common practice to offer more logical space to hosts than is available in the underlying physical storage.

- This results in an aggregate that is over provisioned.

When the aggregate is overprovisioned, it is possible for these types of writes to fail due to lack of available space:

- Writes to any volume with a space guarantee of none.

- Writes to any file that does not have space reservations enabled and that is in a volume with a space guarantee of file.

Therefore, if you have over provisioned your aggregate, you must monitor your available space, and add storage to the aggregate as needed to avoid write errors due to insufficient space.
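The conditions listed above can be encoded as a small decision helper. This minimal Python sketch uses hypothetical helper names and covers only the two write types named in this section. It does not query ONTAP:

```python
def aggregate_is_overprovisioned(aggr_size_gb: float, volume_sizes_gb: list[float]) -> bool:
    """True when the volumes' combined nominal size exceeds the aggregate size."""
    return sum(volume_sizes_gb) > aggr_size_gb


def write_may_fail_for_aggr_space(volume_guarantee: str, file_space_reserved: bool) -> bool:
    """Encode the two cases listed above in which a write can fail for lack of
    aggregate space once the aggregate is overprovisioned."""
    if volume_guarantee == "none":
        return True
    if volume_guarantee == "file" and not file_space_reserved:
        return True
    # Other combinations are not on the list above. A write can still fail
    # when the volume itself fills up, which this check does not cover.
    return False


print(aggregate_is_overprovisioned(1_000, [600, 600]))                   # True
print(write_may_fail_for_aggr_space("none", file_space_reserved=True))   # True
print(write_may_fail_for_aggr_space("file", file_space_reserved=False))  # True
print(write_may_fail_for_aggr_space("file", file_space_reserved=True))   # False
```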

Note: LUNs in this context refer to the LUNs that Data ONTAP serves to clients, not to the array LUNs used for storage on a storage array.

Note: The aggregate must provide enough free space to hold the metadata for each volume it contains. The space required for a volume's metadata is approximately 0.5 percent of the volume's nominal size.
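The approximately 0.5 percent rule can be turned into a quick capacity check. This minimal Python sketch uses hypothetical helper names and treats the 0.5 percent figure as the approximation stated in the note, not an exact ONTAP calculation:

```python
def metadata_estimate_gb(nominal_size_gb: float) -> float:
    """Rough metadata space one volume needs: about 0.5% of its nominal size."""
    return nominal_size_gb * 0.5 / 100


def aggregate_can_hold_metadata(aggr_free_gb: float, volume_sizes_gb: list[float]) -> bool:
    """Compare free aggregate space with the summed metadata estimates."""
    needed = sum(metadata_estimate_gb(s) for s in volume_sizes_gb)
    return aggr_free_gb >= needed


print(metadata_estimate_gb(4_000))                      # 20.0
print(aggregate_can_hold_metadata(25, [4_000]))         # True
print(aggregate_can_hold_metadata(25, [4_000, 2_000]))  # False (needs 30.0)
```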

How to check whether a LUN is thin provisioned

To check whether a LUN has been thin provisioned, run the following command and check the Space Reservation, Space Reservations Honored, and Space Allocation fields in the output:

```
cluster1::> lun show -vserver vs1 -path /vol/vol1/lun1 -instance

Vserver Name: vs1
LUN Path: /vol/vol1/lun1
Volume Name: vol1
Qtree Name: ""
LUN Name: lun1
LUN Size: 10MB
OS Type: linux
Space Reservation: disabled
Serial Number: wCVt1]IlvQWv
Serial Number (Hex): 77435674315d496c76515776
Comment: new comment
Space Reservations Honored: false
Space Allocation: disabled
State: offline
LUN UUID: 76d2eba4-dd3f-494c-ad63-1995c1574753
Mapped: mapped
Block Size: 512
```

These fields are explained below:

- [-space-reserve {enabled|disabled}] - Space Reservation - Selects the LUNs that match this parameter value. If enabled, the LUN is space-reserved. If disabled, the LUN is thinly provisioned. The default is enabled.

- [-space-reserve-honored {true|false}] - Space Reservations Honored - Selects the LUNs that match this parameter value. A value of true displays the LUNs that have space reservation honored by the container volume. A value of false displays the LUNs that are thinly provisioned.

- [-space-allocation {enabled|disabled}] - Space Allocation - Selects the LUNs that match this parameter value. If you set this parameter to enabled, space allocation is enabled and provisioning threshold events for the LUN are reported. If you set this parameter to disabled, space allocation is not enabled and provisioning threshold events for the LUN are not reported.

Note: When using thin provisioning, keep track of the space used by the LUN to avoid running out of space in the volume.
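If you capture the command output as text (for example, saved from an SSH session), the check above can be scripted. This is a minimal Python sketch. `parse_instance_output` and `lun_provisioning` are hypothetical helpers. The parser assumes the one-field-per-line `Field Name: value` layout shown in the sample and does not call any ONTAP API:

```python
def parse_instance_output(text: str) -> dict[str, str]:
    """Turn 'Field Name: value' lines from '-instance' output into a dict."""
    fields = {}
    for line in text.splitlines():
        # Skip the CLI prompt line (it contains '::') and lines without a field.
        if "::" in line or ":" not in line:
            continue
        key, _, value = line.partition(":")  # split on the first colon only
        fields[key.strip()] = value.strip()
    return fields


def lun_provisioning(fields: dict[str, str]) -> dict[str, bool]:
    """Interpret the three LUN fields discussed above."""
    return {
        "thin_provisioned": fields.get("Space Reservation") == "disabled",
        "reservation_honored": fields.get("Space Reservations Honored") == "true",
        "space_allocation_enabled": fields.get("Space Allocation") == "enabled",
    }


SAMPLE = """\
cluster1::> lun show -vserver vs1 -path /vol/vol1/lun1 -instance
Vserver Name: vs1
LUN Path: /vol/vol1/lun1
Space Reservation: disabled
Space Reservations Honored: false
Space Allocation: disabled
"""

print(lun_provisioning(parse_instance_output(SAMPLE)))
# {'thin_provisioned': True, 'reservation_honored': False, 'space_allocation_enabled': False}
```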

How to check whether a volume is thin provisioned

To check whether a volume has been thin provisioned, run the following command and check the Volume Size, Space Guarantee Style, and Space Guarantee In Effect fields in the output:

```
cluster1::*> volume show -vserver vs1 -volume vol1

Vserver Name: vs1
Volume Name: vol1
Aggregate Name: aggr1
Volume Size: 30MB
Volume Data Set ID: 1026
Volume Master Data Set ID: 2147484674
Volume State: online
Volume Type: RW
Volume Style: flex
Is Cluster Volume: true
Is Constituent Volume: false
Export Policy: default
User ID: root
Group ID: daemon
Security Style: mixed
Unix Permissions: ---rwx------
Junction Path: -
Junction Path Source: -
Junction Active: -
Junction Parent Volume: -
Comment:
Available Size: 23.20MB
Filesystem Size: 30MB
Total User-Visible Size: 28.50MB
Used Size: 5.30MB
Used Percentage: 22%
Volume Nearly Full Threshold Percent: 95%
Volume Full Threshold Percent: 98%
Maximum Autosize (for flexvols only): 8.40GB
Minimum Autosize: 30MB
Autosize Grow Threshold Percentage: 85%
Autosize Shrink Threshold Percentage: 50%
Autosize Mode: off
Autosize Enabled (for flexvols only): false
Total Files (for user-visible data): 217894
Files Used (for user-visible data): 98
Space Guarantee Style: none
Space Guarantee In Effect: true
Snapshot Directory Access Enabled: true
Space Reserved for Snapshot Copies: 5%
Snapshot Reserve Used: 98%
Snapshot Policy: default
Creation Time: Mon Jul 08 10:54:32 2013
Language: C.UTF-8
Clone Volume: false
Node name: cluster-1-01
```

These fields are explained below:

- [-size {<integer>[KB|MB|GB|TB|PB]}] - Volume Size - If this parameter is specified, the command displays information only about the volume or volumes that have the specified size. Size is the maximum amount of space a volume can consume from its associated aggregate(s), including user data, metadata, Snapshot copies, and Snapshot reserve. Note that for volumes without a -space-guarantee of volume, the ability to fill the volume to this maximum size depends on the space available in the associated aggregate or aggregates.

- [-space-guarantee-enabled {true|false}] - Space Guarantee in Effect - If this parameter is specified, the command displays information only about the volume or volumes that have the specified space-guarantee setting. If the value of -space-guarantee is none, the value of -space-guarantee-enabled is always true. In other words, because there is no guarantee, the guarantee is always in effect. If the value of -space-guarantee is volume, the value of -space-guarantee-enabled can be true or false, depending on whether the guaranteed amount of space was available when the volume was mounted.

- [-space-guarantee | -s {none|volume}] - Space Guarantee Style - If this parameter is specified, the command displays information only about the volume or volumes that have the specified space guarantee style. A value of none indicates a thin-provisioned volume. A value of volume indicates a volume whose full size is guaranteed in the aggregate.
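The same kind of text parsing works for the volume fields described above. This is a minimal Python sketch with hypothetical helper names. It assumes you have captured the `volume show` output as text in the `Field Name: value` layout shown above. Because the parser splits on the first colon only, values that contain colons, such as the creation time, are kept intact:

```python
def parse_instance_output(text: str) -> dict[str, str]:
    """Turn 'Field Name: value' lines from instance-style output into a dict."""
    fields = {}
    for line in text.splitlines():
        # Skip the CLI prompt line (it contains '::') and lines without a field.
        if "::" in line or ":" not in line:
            continue
        key, _, value = line.partition(":")  # split on the first colon only
        fields[key.strip()] = value.strip()
    return fields


def volume_provisioning(fields: dict[str, str]) -> dict[str, object]:
    """Interpret the space guarantee fields discussed above."""
    style = fields.get("Space Guarantee Style")
    return {
        "thin_provisioned": style == "none",
        "guarantee_style": style,
        # Always true for 'none'; for 'volume' it shows whether the guaranteed
        # space was available when the volume was mounted.
        "guarantee_in_effect": fields.get("Space Guarantee In Effect") == "true",
    }


SAMPLE = """\
cluster1::*> volume show -vserver vs1 -volume vol1
Vserver Name: vs1
Volume Name: vol1
Volume Size: 30MB
Space Guarantee Style: none
Space Guarantee In Effect: true
Creation Time: Mon Jul 08 10:54:32 2013
"""

fields = parse_instance_output(SAMPLE)
print(fields["Creation Time"])  # Mon Jul 08 10:54:32 2013
print(volume_provisioning(fields))
# {'thin_provisioned': True, 'guarantee_style': 'none', 'guarantee_in_effect': True}
```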

How to view a Volume's Storage Footprint

You can also use the volume show-footprint command to check how much total space the volume is using in the aggregate.

```
cluster1::> volume show-footprint

Vserver : nodevs
Volume  : vol0

Feature                                Used Used%
-------------------------------- ---------- -----
Volume Data Footprint               103.1MB   11%
Volume Guarantee                    743.6MB   83%
Flexible Volume Metadata             4.84MB    1%
Delayed Frees                        4.82MB    1%
Total Footprint                     856.3MB   95%
```

Note: The example above shows a thick-provisioned volume. If the volume is thin provisioned, the Volume Guarantee value is zero.

- The total footprint value shows the amount of space that’s being used by the volume in the aggregate.

- You should keep track of these numbers, especially when using thin provisioning, to know how much space is being consumed by the volume in the aggregate and to make changes accordingly.

- Finally, when using thin provisioning for volumes and LUNs, track how much space is being used in the aggregate to avoid running out of space.
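When tracking these numbers, it can help to see which rows make up the total. This minimal Python sketch parses captured `volume show-footprint` output and compares the sum of the feature rows with the reported Total Footprint. The helper names are hypothetical. The unit table assumes 1024-based units, although the sample uses only MB. In this sample, the rows add up to 856.36MB against a displayed 856.3MB. The small gap is presumably rounding in the displayed values:

```python
import re

# Assumption: 1024-based units; only MB appears in the sample below.
UNIT_TO_MB = {"KB": 1 / 1024, "MB": 1.0, "GB": 1024.0, "TB": 1024.0 ** 2}
# Matches rows such as "Volume Data Footprint   103.1MB   11%".
ROW = re.compile(
    r"^\s*(?P<feature>\S.*?)\s+(?P<num>\d+(?:\.\d+)?)(?P<unit>[KMGT]B)\s+(?P<pct>\d+)%\s*$"
)


def parse_footprint(text: str) -> dict[str, float]:
    """Map each feature row of show-footprint output to its size in MB."""
    rows = {}
    for line in text.splitlines():
        m = ROW.match(line)
        if m:
            rows[m["feature"]] = float(m["num"]) * UNIT_TO_MB[m["unit"]]
    return rows


def component_sum_mb(rows: dict[str, float]) -> float:
    """Sum every feature row except the reported total."""
    return sum(v for k, v in rows.items() if k != "Total Footprint")


SAMPLE = """\
Vserver : nodevs
Volume  : vol0

Feature                                Used Used%
-------------------------------- ---------- -----
Volume Data Footprint               103.1MB   11%
Volume Guarantee                    743.6MB   83%
Flexible Volume Metadata             4.84MB    1%
Delayed Frees                        4.82MB    1%
Total Footprint                     856.3MB   95%
"""

rows = parse_footprint(SAMPLE)
print(round(component_sum_mb(rows), 2))  # 856.36
print(rows["Total Footprint"])           # 856.3
```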

How to view an Aggregate's overall storage usage

The following command can be used to track space usage in an aggregate:

```
cluster1::> storage aggregate show-space

Aggregate : wqa_gx106_aggr1

Feature                                Used  Used%
-------------------------------- ---------- ------
Volume Footprints                   101.0MB     0%
Aggregate Metadata                    300KB     0%
Snapshot Reserve                     5.98GB     5%
Total Used                           6.07GB     5%
Total Physical Used                 34.82KB     0%
```

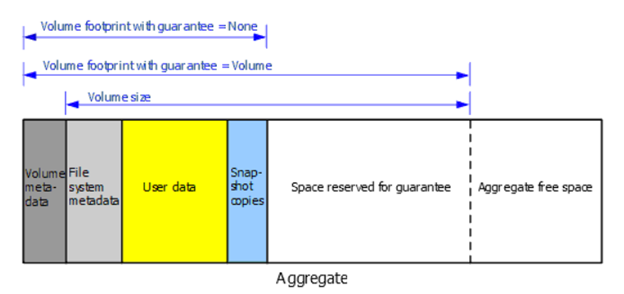

Visual representation of storage used within an aggregate

- Volume Footprints: the total of all volume footprints within the aggregate. It includes all the space used or reserved by the data and metadata of all volumes in the containing aggregate, as shown in the diagram above.

- Total Used: the sum of all space used or reserved in the aggregate by volumes, metadata, or Snapshot copies.

- Total Physical Used: the amount of space being used for data now (rather than being reserved for future use). Includes space used by aggregate Snapshot copies.

Space Management

- The storage administrator needs to monitor the cluster and increase the size of the aggregate as needed to avoid running out of space.

- Methods to create space in an aggregate
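Part of the monitoring step above can be scripted against captured `storage aggregate show-space` output. This minimal Python sketch reads the Used% column of the `Total Used` row and flags the aggregate when it reaches a threshold. The helper names and the 85% default threshold are hypothetical choices, not ONTAP defaults:

```python
import re

# Matches rows such as "Total Used    6.07GB    5%".
ROW = re.compile(
    r"^\s*(?P<feature>\S.*?)\s+(?P<num>\d+(?:\.\d+)?)(?P<unit>[KMGTP]?B)\s+(?P<pct>\d+)%\s*$"
)


def used_percentages(text: str) -> dict[str, int]:
    """Map each feature row of show-space output to its Used% value."""
    result = {}
    for line in text.splitlines():
        m = ROW.match(line)
        if m:
            result[m["feature"]] = int(m["pct"])
    return result


def needs_attention(text: str, threshold_pct: int = 85) -> bool:
    """True when the aggregate's Total Used percentage reaches the threshold."""
    pct = used_percentages(text).get("Total Used")
    if pct is None:
        raise ValueError("no 'Total Used' row in the output")
    return pct >= threshold_pct


SAMPLE = """\
Aggregate : wqa_gx106_aggr1

Feature                                Used  Used%
-------------------------------- ---------- ------
Volume Footprints                   101.0MB     0%
Aggregate Metadata                    300KB     0%
Snapshot Reserve                     5.98GB     5%
Total Used                           6.07GB     5%
Total Physical Used                 34.82KB     0%
"""

print(used_percentages(SAMPLE)["Total Used"])  # 5
print(needs_attention(SAMPLE))                 # False
```

A percentage check like this only flags the aggregate. Freeing space or adding storage to the aggregate remains a manual decision.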

Additional Information

For further information, please refer to the following documentation: